An AI hiring tool used by a major tech company was discovered to systematically downgrade resumes that contained the word "women's" — as in "women's chess club" or "women's engineering society." The system wasn't programmed to discriminate. It learned to, from a decade of hiring data where men were overwhelmingly selected. The AI found the pattern and replicated it, efficiently and at scale.

That story — Amazon's recruiting tool, since discontinued — captures the core ethics problem with AI better than any philosophical argument. The technology isn't biased in the way humans are biased. It doesn't have beliefs or prejudices. It has training data, and if that data reflects historical inequality, the AI reproduces that inequality with mathematical precision.

Bias Isn't a Bug — It's a Feature of the Data

We tend to think of AI bias as a technical problem with a technical solution: fix the algorithm, remove the bias. This framing is dangerously incomplete. The bias isn't in the algorithm. It's in the world the algorithm learns from.

A facial recognition system trained primarily on light-skinned faces performs poorly on dark-skinned faces. The algorithm isn't racist. It's undertrained — and the undertrained categories map directly onto social inequalities that determine whose faces get photographed, catalogued, and used as training data. The technical fix (more diverse training data) addresses the symptom. The root cause is a society that systematically creates more data about some people than others.

In healthcare, diagnostic AI trained on data from well-resourced hospitals performs worse for conditions common in underserved communities. The data gap reflects a healthcare gap, and the AI inherits both. A dermatology AI that is, say, 95% accurate on light skin but only 60% accurate on dark skin isn't just a bad algorithm; it's a mirror reflecting which patients historically received attention and documentation.
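To see how a gap like that can hide inside a single headline number, here is a minimal sketch in Python. It uses the 95% and 60% figures from the paragraph above. The 90/10 split between the two groups is an assumption made up for illustration, and `overall_accuracy` is a hypothetical helper, not part of any real evaluation library.

```python
def overall_accuracy(groups):
    """Weighted average of per-group accuracy.

    `groups` maps a group name to (share_of_test_set, accuracy).
    """
    return sum(share * acc for share, acc in groups.values())


# Accuracies come from the example in the text; the 90/10 shares are
# an assumption for illustration only, not measured data.
groups = {
    "light_skin": (0.90, 0.95),
    "dark_skin": (0.10, 0.60),
}

headline = overall_accuracy(groups)
gap = groups["light_skin"][1] - groups["dark_skin"][1]

print(f"overall accuracy: {headline:.3f}")
print(f"accuracy gap between groups: {gap:.2f}")
```

Under these assumed shares the headline accuracy stays above 90% even though one group is served far worse, which is why per-group reporting matters more than a single aggregate figure.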

In my view, the most honest thing we can say about AI bias is that it forces us to confront inequalities we'd prefer to ignore. When a human doctor makes a biased diagnosis, it's one patient, one moment, hard to detect. When an AI makes a biased diagnosis at scale, the pattern is visible, measurable, and undeniable. The AI didn't create the problem. It made the problem impossible to overlook.

The Privacy Trade-Off Nobody Reads

Every AI service you use improves by processing your data. Your queries, your preferences, your writing style, your purchasing patterns — each interaction is a training signal. Not always directly (many companies anonymize and aggregate), but the fundamental exchange is: you get a better product, the company gets your behavioral data.

The privacy policies describing this exchange typically run 4,000 to 6,000 words, roughly the length of a short story, and are written in legal language that requires specialized knowledge to fully parse. Studies suggest that fewer than 10% of users read them. The rest, more than 90%, consent to terms they haven't read, to data practices they don't understand, for products that get better precisely because so many people are willing to not ask questions.

I'm not sure this constitutes informed consent in any meaningful sense. Tapping "I agree" on a document you didn't read because you need the product and the alternative is not using it — that's a power dynamic, not a choice.

The Framework We Need

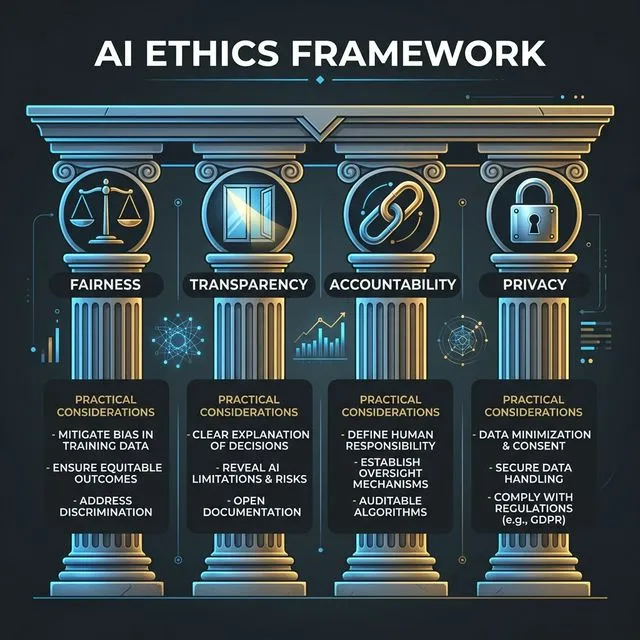

After spending considerable time reading AI ethics proposals from governments, corporations, and academic institutions, I've noticed they mostly converge on four principles — but differ dramatically on implementation:

Fairness: AI systems should not discriminate based on protected characteristics. In practice, this is harder than it sounds because fairness itself has multiple technical definitions that sometimes conflict. A system can be fair by one metric (equal accuracy across groups) and unfair by another (equal false-positive rates across groups). Which definition of fairness to optimize for is a moral choice, not a technical one.

Transparency: Users should understand how AI decisions affecting them are made. But deep learning models are inherently opaque — even their creators can't fully explain why a specific output was produced. "Explainable AI" is an active research area, but current explanations are often approximations, not true accounts of the model's reasoning.

Accountability: When an AI system causes harm, someone must be responsible. But "someone" is genuinely unclear. The company that built the model? The company that deployed it? The user who relied on it? The data providers? The chain of causation in AI systems is long and diffuse, and current legal frameworks weren't designed for it.

Privacy: Personal data should be protected. But AI needs data to function, and the most useful AI systems need the most data. The tension between privacy and capability is real, structural, and not easily resolvable through better encryption or anonymization alone.
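The conflict described under Fairness can be made concrete with a small sketch. The confusion-matrix counts below are invented for illustration, and the `Confusion` class is a hypothetical helper. The point is only that two groups can have identical accuracy while their false-positive rates differ.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Confusion-matrix counts for one group (hypothetical data)."""
    tp: int  # true positives
    fn: int  # false negatives
    fp: int  # false positives
    tn: int  # true negatives

    def accuracy(self) -> float:
        total = self.tp + self.fn + self.fp + self.tn
        return (self.tp + self.tn) / total

    def false_positive_rate(self) -> float:
        # Share of actual negatives that were wrongly flagged positive.
        return self.fp / (self.fp + self.tn)


# Invented counts, 100 people per group, chosen so accuracy matches.
group_a = Confusion(tp=35, fn=15, fp=5, tn=45)
group_b = Confusion(tp=20, fn=0, fp=20, tn=60)

for name, g in (("A", group_a), ("B", group_b)):
    print(f"group {name}: accuracy={g.accuracy():.2f}, "
          f"false-positive rate={g.false_positive_rate():.2f}")
```

With these counts both groups come out at 80% accuracy, yet people in group B who should have been cleared are wrongly flagged two and a half times as often as those in group A. Deciding which of the two numbers to equalize is the moral choice described above. The code cannot make it.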

What I Think Matters Most

After all the reading, thinking, and discussions I've had about AI ethics, the principle I keep returning to is: the people affected by AI decisions should have meaningful input into how those systems are designed and deployed. Not consulted after the fact. Not represented by proxies. Actually involved.

A hiring AI should be built with input from job seekers, not just HR departments. A healthcare AI should be developed with patient advocates, not just hospital administrators. A criminal justice AI should involve communities subjected to its predictions, not just police departments.

This isn't happening at scale. The people building AI systems are predominantly technically trained, economically privileged, and geographically concentrated in a few cities. The people most affected by AI systems are often none of these things. Bridging that gap — not just technically, but politically and socially — is the ethics challenge that matters most, and the one we're making the least progress on.
